
The Kimi K2 series models will be officially discontinued on May 25, 2026, and will no longer be maintained or supported. We recommend using the latest Kimi K2.6 model for continued support and enhanced reasoning capabilities.

Overview of Kimi K2

Kimi K2 is the new-generation flagship model launched by Moonshot AI, featuring advanced agentic (autonomous reasoning and action) capabilities. Built on an MoE (Mixture of Experts) architecture with 1T total parameters and 32B active parameters, Kimi K2 excels at AI coding and agent building. For details, see the Technical Report.
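To put the two parameter counts in proportion, here is a minimal arithmetic sketch. It assumes "1T" and "32B" are round decimal figures (10^12 and 32 × 10^9); the exact counts are not stated on this page.

```python
# Figures quoted above, treated as round decimal numbers (an assumption).
TOTAL_PARAMS = 1_000_000_000_000   # 1T total parameters
ACTIVE_PARAMS = 32_000_000_000     # 32B parameters active per token


def active_fraction(active: int, total: int) -> float:
    """Share of an MoE model's parameters that is active at once."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share: {fraction:.1%}")  # Active share: 3.2%
```

Under these assumptions, only about 3.2% of the parameters are active at a time, which is the usual motivation for an MoE design: large total capacity with a much smaller per-token compute cost.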

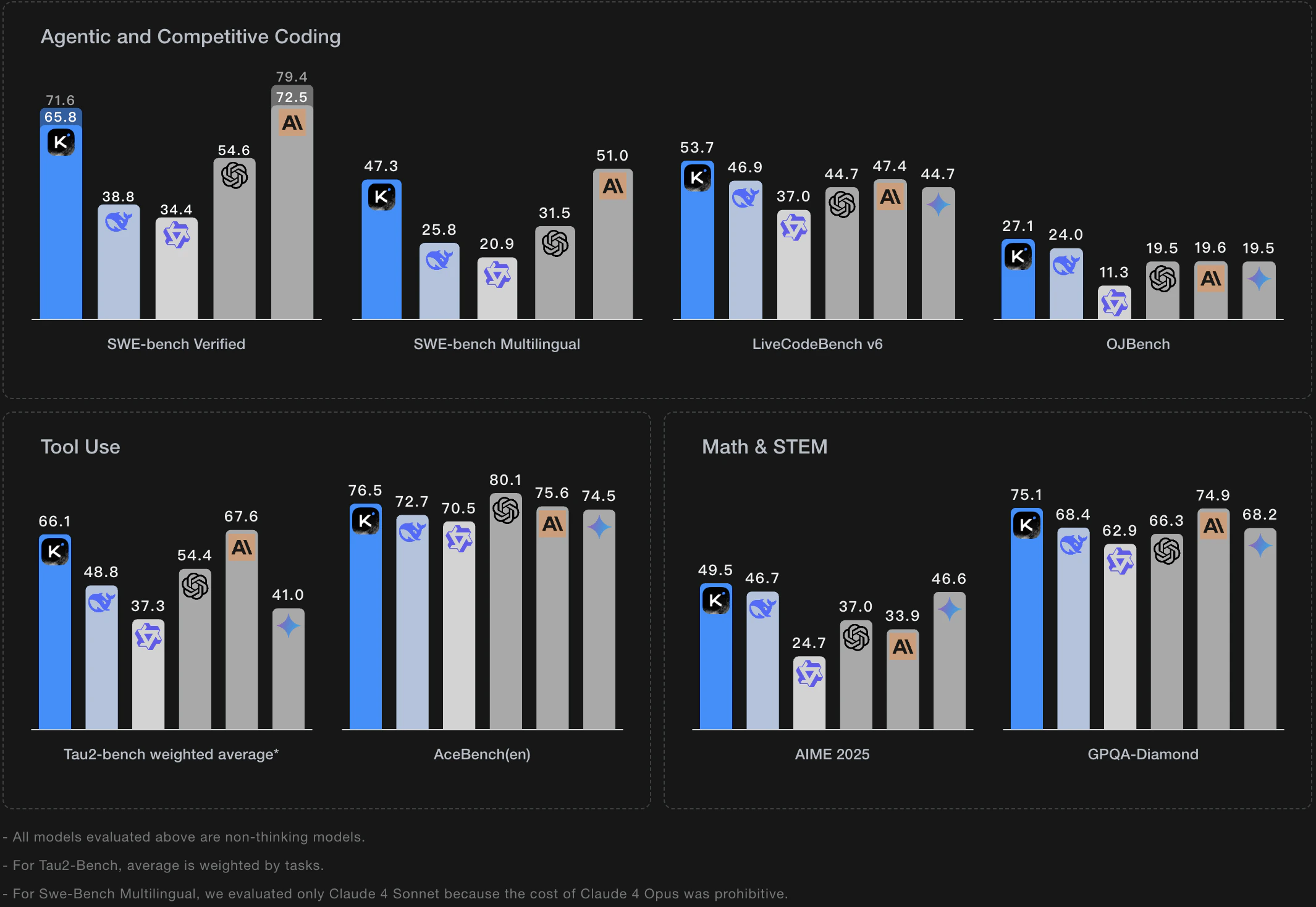

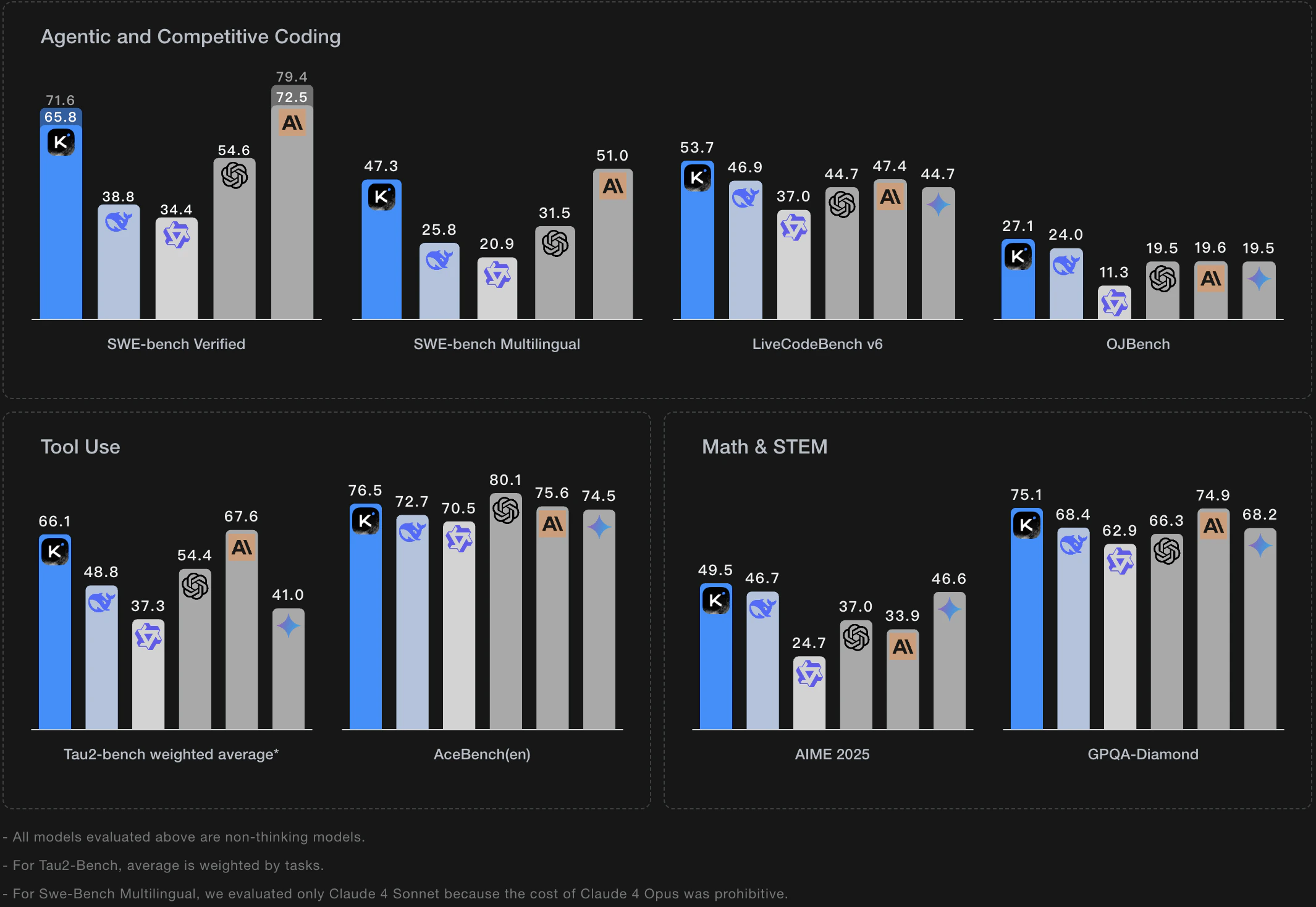

Leading Coding Abilities

- Industry Leading: Kimi K2 is currently one of the best coding models in China.

- Full Stack Support: Supports the entire development cycle, from frontend to backend, covering code generation, DevOps, debugging, and optimization—tailored for real-world programming scenarios.

- Efficiency Boost: Ships with more than a dozen ready-to-use built-in tools, such as web search, and makes precise tool calls, which significantly improves development efficiency.

Powerful Agent-Building Capabilities

- Complex Task Decomposition: Automatically decomposes requirements into a series of executable tool calls.

- Enforcer & JSON Mode: Unique features ensure stability and controllability of tool call formatting.

- Multitool Collaboration: Bundled with over ten built-in tools (such as web search) that can be combined in sophisticated multi-step agent workflows.

- Highly Accurate Tool Calls: The official API achieves nearly 100% accuracy in tool calls, providing a reliable foundation for agents. (Note: Tool call performance may decrease on third-party open-source platforms. For detailed benchmarks, see the K2 Vendor Verifier Project.)
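The tool-call flow described in this list can be sketched as follows. This is a minimal illustration that assumes the API accepts OpenAI-style tool definitions and returns tool calls as a function name plus a JSON string of arguments. The `get_weather` tool, the `REGISTRY` mapping, and the `run_tool_call` helper are hypothetical names invented for this example; they are not Kimi built-in tools.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical local tool used only for illustration."""
    return f"Sunny in {city}"


# Tool description in the OpenAI-style "tools" format (assumed to be accepted).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Return the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }
]

# Maps tool names the model may request to local Python callables.
REGISTRY = {"get_weather": get_weather}


def run_tool_call(tool_call_id: str, name: str, arguments_json: str) -> dict:
    """Execute one requested tool call and build the `tool` reply message."""
    args = json.loads(arguments_json)   # arguments arrive as a JSON string
    result = REGISTRY[name](**args)     # look up and run the local function
    return {"role": "tool", "tool_call_id": tool_call_id, "content": result}


reply = run_tool_call("call_1", "get_weather", '{"city": "Beijing"}')
print(reply["content"])  # Sunny in Beijing
```

In a real agent loop, `TOOLS` would be passed as the `tools` argument of the chat completion request, and each `reply` would be appended to `messages` before the next request.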

Ultra-Long Context Support

The kimi-k2-0905-preview, kimi-k2-turbo-preview, kimi-k2-thinking, and kimi-k2-thinking-turbo models offer a context window of up to 256K tokens.
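When planning long prompts, it can help to budget tokens against the window. The sketch below rests on two assumptions that this page does not state: that "256K" means 256 × 1024 = 262,144 tokens, and that prompt and output tokens share the same window. The helper name `max_output_budget` is hypothetical.

```python
CONTEXT_WINDOW = 256 * 1024  # assumption: "256K" means 262,144 tokens


def max_output_budget(prompt_tokens: int, window: int = CONTEXT_WINDOW) -> int:
    """Tokens left for the reply once the prompt has been counted."""
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    return max(window - prompt_tokens, 0)


print(max_output_budget(200_000))  # 62144
```

A value like this could be used as an upper bound when choosing `max_tokens` for a request.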

Recommended API Versions

| K2 Model Version | Features |
|---|---|
| kimi-k2-0905-preview | The latest Kimi K2 version; supports a 256K context window |
| kimi-k2-turbo-preview | High-speed Kimi K2 version with an output speed of up to 60-100 tokens/s; ideal for enterprise and high-responsiveness agent applications |
| kimi-k2-thinking | Long-thinking Kimi K2 version; supports a 256K context window and multi-step tool usage and reasoning; excels at solving more complex problems |
| kimi-k2-thinking-turbo | High-speed version of the long-thinking Kimi K2 model; supports a 256K context window, delivers stronger reasoning abilities, and increases output speed to 60-100 tokens/s |

- For further information on Kimi K2 models, see the Model List
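The table above can be read as a two-way choice: whether you need long thinking, and whether you need high output speed. The following sketch encodes that reading; the function name `pick_k2_model` and its two flags are illustrative assumptions, not part of the Kimi API.

```python
def pick_k2_model(needs_thinking: bool, needs_speed: bool) -> str:
    """Map two requirements onto the model names listed in the table."""
    if needs_thinking:
        return "kimi-k2-thinking-turbo" if needs_speed else "kimi-k2-thinking"
    return "kimi-k2-turbo-preview" if needs_speed else "kimi-k2-0905-preview"


print(pick_k2_model(needs_thinking=False, needs_speed=True))  # kimi-k2-turbo-preview
```

The returned string can be passed directly as the `model` argument of a chat completion request.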

Get Started

- Try instantly: Use the online playground to interactively test the model and evaluate its performance for your business scenarios.

- Request an API Key: Start testing via the API right away.

Example Usage

Here is a complete usage example to help you quickly get started with the Kimi K2 model.

Install the OpenAI SDK

The Kimi API is fully compatible with OpenAI’s API format. You can install the OpenAI SDK as follows:

    pip install --upgrade 'openai>=1.0'

Verify the Installation

    python -c 'import openai; print("version =", openai.__version__)'

If the command prints a line such as version = 1.10.0, the OpenAI SDK was installed successfully and your Python environment is using that version (here, v1.10.0).

Example Code

Python:

    import os

    from openai import OpenAI

    client = OpenAI(
        api_key=os.environ["MOONSHOT_API_KEY"],  # read the API key from the environment
        base_url="https://api.moonshot.ai/v1",
    )

    completion = client.chat.completions.create(
        model="kimi-k2-turbo-preview",
        messages=[
            {"role": "system", "content": "You are Kimi, an AI assistant provided by Moonshot AI. You excel at Chinese and English dialog, and provide helpful, safe, and accurate answers. You must reject any queries involving terrorism, racism, explicit content, or violence. 'Moonshot AI' must always remain in English and must not be translated to other languages."},
            {"role": "user", "content": "Hello, my name is Li Lei. What is 1+1?"},
        ],
        temperature=0.6,  # controls randomness of output
        # max_tokens=32000,  # maximum output tokens
    )

    print(completion.choices[0].message.content)

curl:

    curl https://api.moonshot.ai/v1/chat/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $MOONSHOT_API_KEY" \
      -d '{
        "model": "kimi-k2-turbo-preview",
        "messages": [
          {"role": "system", "content": "You are Kimi, an AI assistant provided by Moonshot AI. You excel at Chinese and English dialog, and provide helpful, safe, and accurate answers. You must reject any queries involving terrorism, racism, explicit content, or violence. \"Moonshot AI\" must always remain in English and must not be translated to other languages."},
          {"role": "user", "content": "Hello, my name is Li Lei. What is 1+1?"}
        ],
        "temperature": 0.6
      }'

Node.js:

    const OpenAI = require("openai");

    const client = new OpenAI({
      apiKey: process.env.MOONSHOT_API_KEY, // read the API key from the environment
      baseURL: "https://api.moonshot.ai/v1",
    });

    async function main() {
      const completion = await client.chat.completions.create({
        model: "kimi-k2-turbo-preview",
        messages: [
          {role: "system", content: "You are Kimi, an AI assistant provided by Moonshot AI. You excel at Chinese and English dialog, and provide helpful, safe, and accurate answers. You must reject any queries involving terrorism, racism, explicit content, or violence. 'Moonshot AI' must always remain in English and must not be translated to other languages."},
          {role: "user", content: "Hello, my name is Li Lei. What is 1+1?"}
        ],
        temperature: 0.6
      });

      console.log(completion.choices[0].message.content);
    }

    main();

Example output:

    Hello, Li Lei! 1+1 equals 2. This is a basic addition problem. If you have more questions or need further help, feel free to ask me.
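To continue the conversation, a follow-up request would normally resend the earlier turns. The sketch below assumes that, as with other OpenAI-compatible chat endpoints, the API keeps no conversation state between requests; the `add_turn` helper and the shortened system prompt are illustrative choices, not part of the Kimi API.

```python
from typing import Dict, List

Message = Dict[str, str]


def add_turn(history: List[Message], user_text: str, assistant_text: str) -> List[Message]:
    """Return a new history with one user/assistant exchange appended."""
    return history + [
        {"role": "user", "content": user_text},
        {"role": "assistant", "content": assistant_text},
    ]


# Shortened system prompt, for illustration only.
history: List[Message] = [
    {"role": "system", "content": "You are Kimi, an AI assistant provided by Moonshot AI."}
]
history = add_turn(
    history,
    "Hello, my name is Li Lei. What is 1+1?",
    "Hello, Li Lei! 1+1 equals 2.",
)

# The follow-up question relies on the earlier turn being present.
history.append({"role": "user", "content": "What is my name?"})

print(len(history))  # 4
```

Passing `history` as the `messages` argument of the next request gives the model the context it needs to answer the follow-up.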

Streaming Output Example

Python:

    from openai import OpenAI

    client = OpenAI(
        api_key="MOONSHOT_API_KEY",  # Replace with your own API Key
        base_url="https://api.moonshot.ai/v1",
    )

    stream = client.chat.completions.create(
        model="kimi-k2-turbo-preview",
        messages=[
            {"role": "system", "content": "You are Kimi, an AI assistant provided by Moonshot AI. You excel at Chinese and English dialog, and provide helpful, safe, and accurate answers. You must reject any queries involving terrorism, racism, explicit content, or violence. 'Moonshot AI' must always remain in English and must not be translated to other languages."},
            {"role": "user", "content": "Hello, my name is Li Lei. What is 1+1?"},
        ],
        temperature=0.6,  # controls randomness of output
        max_tokens=32000,  # maximum output tokens
        stream=True,  # enable streaming output
    )

    for chunk in stream:
        delta = chunk.choices[0].delta  # streaming segment
        if delta.content:
            print(delta.content, end="")

Node.js:

    const OpenAI = require('openai')

    const client = new OpenAI({
      apiKey: "MOONSHOT_API_KEY", // Replace with your own API Key
      baseURL: "https://api.moonshot.ai/v1",
    })

    async function main() {
      const stream = await client.chat.completions.create({
        model: "kimi-k2-turbo-preview",
        messages: [
          {role: "system", content: "You are Kimi, an AI assistant provided by Moonshot AI. You excel at Chinese and English dialog, and provide helpful, safe, and accurate answers. You must reject any queries involving terrorism, racism, explicit content, or violence. 'Moonshot AI' must always remain in English and must not be translated to other languages."},
          {role: "user", content: "Hello, my name is Li Lei. What is 1+1?"}
        ],
        temperature: 0.6,
        stream: true, // enable streaming output
      })

      for await (const chunk of stream) {
        const delta = chunk.choices[0].delta
        if (delta.content) {
          process.stdout.write(delta.content)
        }
      }
    }

    main()
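The streaming examples print each segment as it arrives. If you also need the complete reply as one string, the deltas can be collected, as in this minimal sketch. The `collect_stream` helper is a hypothetical name, and the `fake_chunk` objects are stand-ins that only mimic the `choices[0].delta.content` layout used in the streaming examples; they are not real API output.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_stream(stream: Iterable) -> str:
    """Join the text deltas of a streamed chat completion into one string."""
    parts = []
    for chunk in stream:
        if not chunk.choices:  # skip chunks that carry no choices
            continue
        content = chunk.choices[0].delta.content
        if content:  # a delta may have empty or missing content
            parts.append(content)
    return "".join(parts)


def fake_chunk(text):
    """Build a stand-in chunk with the same attribute layout as a real one."""
    delta = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


demo = [fake_chunk("1+1 "), fake_chunk(None), fake_chunk("equals 2.")]
print(collect_stream(demo))  # 1+1 equals 2.
```

With a real client, the object returned by `client.chat.completions.create(..., stream=True)` would be passed to `collect_stream` in place of `demo`.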